Neural Stochastic Processes for Satellite Precipitation Refinement

Under Review

The paper is currently under review, and links will be added once it is published. In the meantime, our code and a static demo are provided as anonymous supplementary material.

Abstract

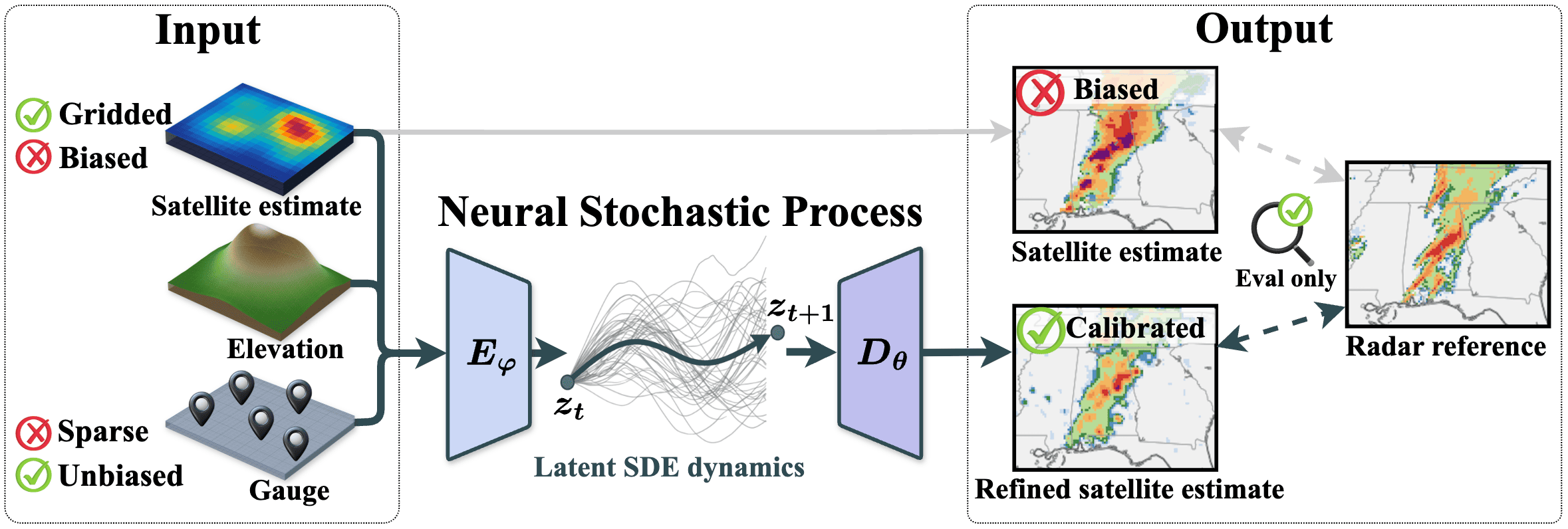

Accurate precipitation estimation is critical for flood forecasting, water resource management, and disaster preparedness. Satellite products provide global hourly coverage but contain systematic biases; ground-based gauges are accurate at point locations but too sparse for direct gridded correction. Existing methods fuse these sources by interpolating gauge observations onto the satellite grid, but treat each time step independently and therefore discard temporal structure in precipitation fields.

We propose the Neural Stochastic Process (NSP), a model that pairs a Neural Process encoder, which conditions on arbitrary sets of gauge observations, with a latent Neural SDE defined over a 2D spatial representation. NSP is trained with a single variational objective whose cost is simulation-free. We also introduce QPEBench, a public benchmark of 43,756 hourly samples over the Contiguous United States (2021–2025) with four aligned data sources and six evaluation metrics. On QPEBench, NSP outperforms thirteen baselines across all six metrics and surpasses JAXA’s operational gauge-calibrated product (GSMaP GC).

Overview

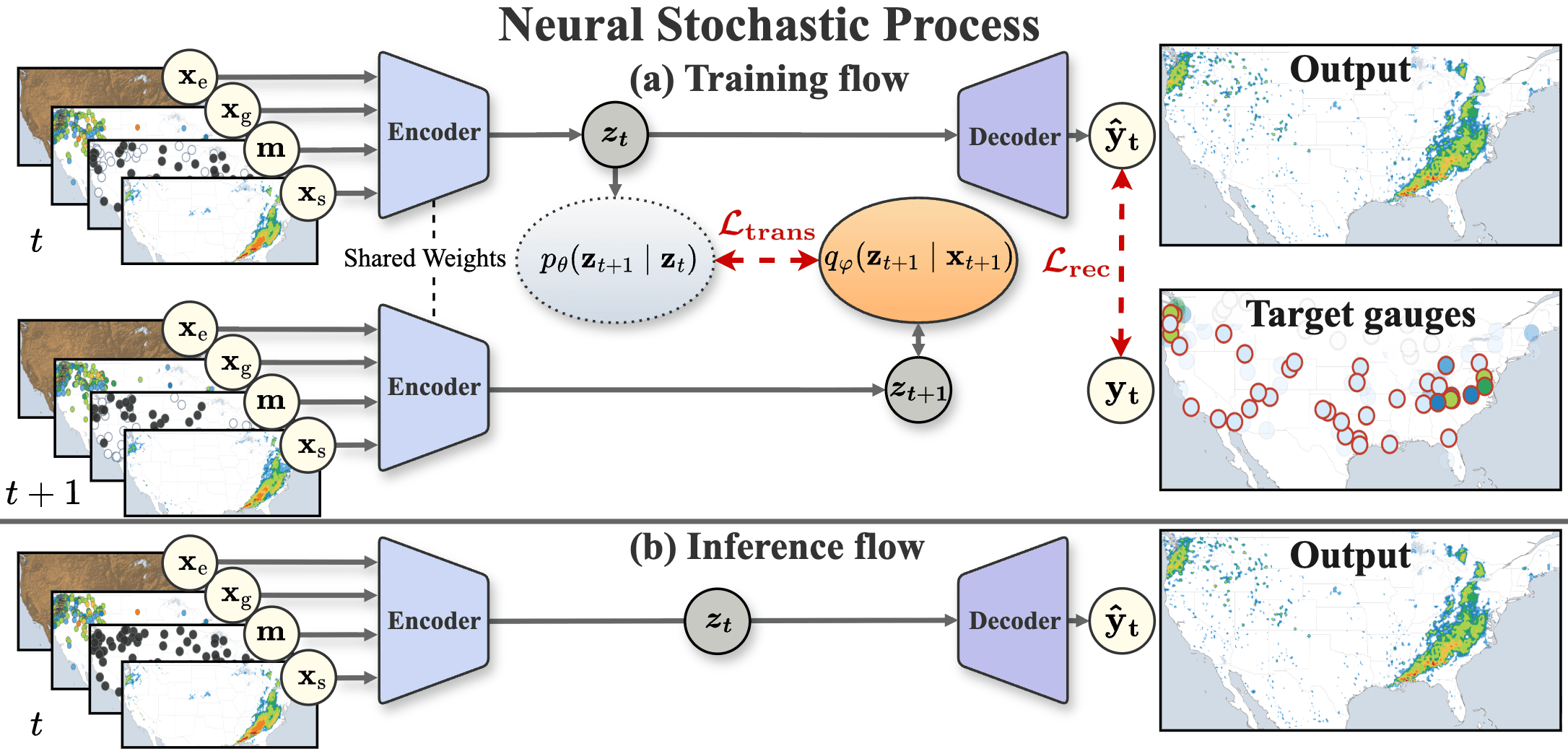

Sparse-context encoder

For each hour, the encoder ingests the satellite estimate, elevation, and an arbitrary, time-varying set of gauge observations encoded as a sparse map and a binary mask. It outputs a spatially structured Gaussian posterior over a 2D latent field, enabling a single model to accommodate the irregular gauge network without architectural changes.
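The per-hour encoder input described above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' code: it assumes gauges have already been snapped to integer grid indices, that gauges falling in the same cell are averaged, and that the four inputs are stacked as channels in a hypothetical order (satellite, elevation, gauge map, mask). `rasterize_gauges` and `build_encoder_input` are hypothetical names.

```python
import numpy as np


def rasterize_gauges(rows, cols, values, shape):
    """Scatter point gauge readings onto the grid as a sparse map plus a binary mask.

    Hypothetical helper: assumes gauges are already snapped to integer
    (row, col) grid indices; gauges sharing a cell are averaged.
    """
    rows = np.asarray(rows, dtype=np.int64)
    cols = np.asarray(cols, dtype=np.int64)
    values = np.asarray(values, dtype=np.float32)

    total = np.zeros(shape, dtype=np.float32)
    count = np.zeros(shape, dtype=np.float32)
    # np.add.at accumulates correctly even when a cell index repeats
    np.add.at(total, (rows, cols), values)
    np.add.at(count, (rows, cols), 1.0)

    mask = (count > 0).astype(np.float32)
    # Mean reading per observed cell; unobserved cells stay at 0
    sparse_map = np.divide(total, count, out=np.zeros_like(total), where=count > 0)
    return sparse_map, mask


def build_encoder_input(satellite, elevation, sparse_map, mask):
    """Stack one hour of inputs into a (channels, H, W) array (hypothetical channel order)."""
    return np.stack([satellite, elevation, sparse_map, mask], axis=0)


# Usage on an empty 260 x 590 hour with three gauges, two of which share a cell
H, W = 260, 590
sparse_map, mask = rasterize_gauges([10, 10, 200], [20, 20, 300], [1.0, 3.0, 0.5], (H, W))
x = build_encoder_input(np.zeros((H, W), np.float32), np.zeros((H, W), np.float32), sparse_map, mask)
print(x.shape)  # (4, 260, 590)
```

Keeping the mask as its own channel lets the network tell a gauge reporting 0 mm apart from a cell with no gauge at all, which the sparse map alone cannot express.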

Latent Neural SDE prior

A convolutional Neural SDE defines the latent prior transition. Training uses a closed-form transition KL derived from Girsanov’s theorem, so no SDE solver calls are required. The SDE acts as a temporal regulariser at training time and is bypassed at inference for efficiency.
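Why a Girsanov-based KL needs no solver can be seen on a single Euler–Maruyama step. For two SDEs that share a diffusion coefficient g and differ only in drift, Girsanov's theorem gives a path-space KL of ½ E∫‖(f_q − f_p)/g‖² dt. Over one step of length Δt, both transitions are Gaussians with variance g²Δt, so the KL collapses to Δt‖f_q − f_p‖²/(2g²). The sketch below assumes a scalar diffusion shared by prior and posterior, a posterior that is also expressed through a drift, and plain callables standing in for the convolutional drift network; it illustrates the principle rather than NSP's exact objective.

```python
import numpy as np


def gaussian_kl(mu_q, var_q, mu_p, var_p):
    """KL(N(mu_q, var_q) || N(mu_p, var_p)) for diagonal Gaussians, summed over elements."""
    return 0.5 * np.sum(
        np.log(var_p / var_q) + (var_q + (mu_q - mu_p) ** 2) / var_p - 1.0
    )


def euler_step_kl(z, drift_q, drift_p, g, dt):
    """Closed-form KL for one Euler-Maruyama step of two SDEs sharing diffusion g.

    Both transitions are N(z + f(z) * dt, g**2 * dt), so the KL reduces to
    dt * ||drift_q(z) - drift_p(z)||^2 / (2 g^2), the discretised Girsanov term.
    Only drift evaluations are needed; nothing is integrated.
    """
    diff = drift_q(z) - drift_p(z)
    return dt * np.sum(diff ** 2) / (2.0 * g ** 2)
```

Because the shared variance cancels in the log-ratio, the general `gaussian_kl` and the specialised `euler_step_kl` agree for any state `z`, which makes the second a convenient cheap form when the diffusion is shared.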

Residual decoder

The decoder predicts a residual correction in log-precipitation space and a heteroscedastic predictive variance, conditioned on the latent field, the satellite estimate, and elevation. The refined precipitation field is obtained by adding this residual to the log-transformed satellite estimate and mapping the result back to precipitation space.
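A minimal sketch of the residual formulation, assuming log1p as the log transform and a per-pixel Gaussian likelihood in log space with predicted variance exp(log_var). The transform, the likelihood, the function names, and the optional supervision mask are all assumptions for illustration.

```python
import numpy as np


def apply_residual(satellite_mm, residual_log):
    """Refine satellite precipitation with a residual predicted in log space.

    Assumes log1p as the (hypothetical) log transform; expm1 maps back, and the
    result is clipped at zero in case the residual pushes a cell below 0 mm.
    """
    refined_log = np.log1p(satellite_mm) + residual_log
    return np.clip(np.expm1(refined_log), 0.0, None)


def heteroscedastic_nll(target_mm, satellite_mm, residual_log, log_var, mask=None):
    """Mean per-pixel Gaussian NLL in log space with variance exp(log_var).

    Constant terms are dropped. `mask` (hypothetical) selects supervised
    pixels, e.g. cells that contain a gauge.
    """
    target_log = np.log1p(target_mm)
    mean_log = np.log1p(satellite_mm) + residual_log
    nll = 0.5 * (log_var + (target_log - mean_log) ** 2 / np.exp(log_var))
    if mask is not None:
        return np.sum(nll * mask) / max(np.sum(mask), 1.0)
    return np.mean(nll)
```

Predicting a residual means an all-zero network output reproduces the satellite estimate exactly, so the network only has to learn corrections; the predicted variance lets the loss down-weight pixels where the model is uncertain.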

Benchmark

QPEBench combines four aligned data sources on a common 260×590 grid at 0.1° resolution (~11 km), spanning five years (January 2021 – December 2025). The benchmark covers the contiguous United States with hourly samples and an independent radar reference that is strictly excluded from training.
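The common grid can be sketched as follows: 260 rows × 590 columns at 0.1° span 26° of latitude and 59° of longitude, and 0.1° of latitude is roughly 11.1 km. The south-west corner (24°N, 125°W) and the south-to-north row order are placeholder assumptions, not QPEBench's stated extent; `latlon_to_index` is a hypothetical helper.

```python
import numpy as np

# Placeholder bounds (assumption): 260 x 590 cells at 0.1 deg cover 26 deg x 59 deg
LAT_MIN, LON_MIN = 24.0, -125.0
RES = 0.1
N_LAT, N_LON = 260, 590

# Cell-centre coordinates, rows ordered south to north (assumption)
lats = LAT_MIN + RES * (np.arange(N_LAT) + 0.5)
lons = LON_MIN + RES * (np.arange(N_LON) + 0.5)


def latlon_to_index(lat, lon):
    """Map coordinates to the (row, col) of the containing cell, or -1 if off-grid."""
    row = np.floor((np.asarray(lat) - LAT_MIN) / RES).astype(np.int64)
    col = np.floor((np.asarray(lon) - LON_MIN) / RES).astype(np.int64)
    inside = (row >= 0) & (row < N_LAT) & (col >= 0) & (col < N_LON)
    return np.where(inside, row, -1), np.where(inside, col, -1)


# One degree of latitude is about 111 km, so 0.1 deg is about 11 km
print(round(RES * 111.32, 1))  # 11.1
```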

Satellite (GSMaP MVK)

JAXA’s GSMaP MVK product provides the gauge-uncorrected hourly satellite precipitation estimate. We chose GSMaP over IMERG because the MVK and gauge-calibrated GC variants share the same retrieval algorithm, enabling a controlled comparison of gauge fusion methods under the same satellite baseline.

Elevation (ETOPO 2022)

Elevation is taken from ETOPO 2022 and resampled to the common 0.1° grid. It is concatenated with the satellite and gauge inputs at every time step.
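The resampling method is not specified here; one simple option is a block mean. If the 60 arc-second (1/60°) ETOPO 2022 grid were used, each 0.1° cell would contain 6 × 6 source cells. The sketch assumes that source resolution, a source tile aligned exactly with the target grid, and block averaging; `block_mean` is a hypothetical helper.

```python
import numpy as np


def block_mean(field, factor):
    """Downsample a 2D array by averaging non-overlapping factor x factor blocks."""
    h, w = field.shape
    if h % factor or w % factor:
        raise ValueError("field shape must be divisible by factor")
    return field.reshape(h // factor, factor, w // factor, factor).mean(axis=(1, 3))


# Usage: a hypothetical aligned 60 arc-second tile is 1560 x 3540 cells
fine = np.zeros((1560, 3540), dtype=np.float32)
elevation = block_mean(fine, 6)
print(elevation.shape)  # (260, 590)
```

Because elevation is static, it can be resampled once and reused as the same channel at every hourly time step.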

Gauge observations (Synoptic Data API)

Hourly reports from 11,879 unique stations are obtained through the Synoptic Data API, totalling more than 423 million records. After quality filtering, an average of about 7,300 gauges per hour are retained.

Radar reference (NOAA MRMS)

NOAA’s Multi-Radar Multi-Sensor (MRMS) Radar-Only QPE provides spatially dense, gauge-independent precipitation estimates and is strictly excluded from training. Models receive only satellite, elevation, and gauge inputs; the radar field serves as evaluation reference.
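Scoring against the radar reference can be sketched as masked RMSE and MAE. This reads the "r" subscript in RMSEr and MAEr as "radar" and assumes, hypothetically, that cells without radar coverage are stored as NaN and excluded; the exact masking used by QPEBench may differ.

```python
import numpy as np


def masked_errors(pred, reference):
    """RMSE and MAE over cells where the reference is finite (NaN = no coverage)."""
    pred = np.asarray(pred, dtype=float)
    reference = np.asarray(reference, dtype=float)
    valid = np.isfinite(reference)
    err = pred[valid] - reference[valid]
    rmse = float(np.sqrt(np.mean(err ** 2)))
    mae = float(np.mean(np.abs(err)))
    return rmse, mae
```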

Results

Table 1 compares NSP against thirteen baselines and JAXA’s operational GSMaP GC product on QPEBench. Means ± standard deviations are reported over three-fold time-series cross-validation, and the best score in each column is in bold. NSP achieves the best performance on all six metrics, including a 4.2% RMSEr reduction over GSMaP GC and a 39.1% RMSEg reduction over the second-best method (IDW), while preserving spatial structure as measured by FSSR.

Table 1. Quantitative comparison on the CONUS test folds.

| Method | RMSEr ↓ | MAEr ↓ | RMSEg ↓ | MAEg ↓ | rr,coll ↑ | FSSR ↑ |
|---|---|---|---|---|---|---|
| Quantile mapping | 4.073 ± 0.184 | 2.012 ± 0.063 | 0.885 ± 0.027 | 0.173 ± 0.006 | 0.288 ± 0.014 | 0.479 ± 0.010 |
| EMOS | 3.885 ± 0.127 | 1.844 ± 0.078 | 1.115 ± 0.096 | 0.179 ± 0.019 | 0.305 ± 0.013 | 0.478 ± 0.029 |
| XGBoost | 3.741 ± 0.129 | 1.827 ± 0.077 | 1.062 ± 0.088 | 0.179 ± 0.020 | 0.284 ± 0.016 | 0.487 ± 0.008 |
| GWR | 3.739 ± 0.230 | 1.627 ± 0.081 | 0.662 ± 0.021 | 0.155 ± 0.004 | 0.376 ± 0.016 | 0.229 ± 0.017 |
| Cokriging | 3.706 ± 0.121 | 1.789 ± 0.069 | 1.065 ± 0.081 | 0.220 ± 0.015 | 0.279 ± 0.016 | 0.461 ± 0.009 |
| GSMaP | 3.638 ± 0.126 | 1.826 ± 0.076 | 1.017 ± 0.086 | 0.179 ± 0.020 | 0.288 ± 0.014 | 0.483 ± 0.014 |
| Kriging | 3.204 ± 0.076 | 1.645 ± 0.027 | 0.774 ± 0.022 | 0.177 ± 0.005 | 0.006 ± 0.003 | 0.007 ± 0.002 |
| ConvCNP | 3.135 ± 0.098 | 1.596 ± 0.046 | 0.684 ± 0.018 | 0.100 ± 0.003 | 0.475 ± 0.005 | 0.053 ± 0.011 |
| U-Net | 3.123 ± 0.057 | 1.563 ± 0.038 | 0.666 ± 0.028 | 0.100 ± 0.004 | 0.461 ± 0.021 | 0.064 ± 0.052 |
| CNP | 3.115 ± 0.077 | 1.575 ± 0.056 | 0.699 ± 0.033 | 0.116 ± 0.006 | 0.340 ± 0.018 | 0.014 ± 0.011 |
| ViT | 3.059 ± 0.108 | 1.530 ± 0.079 | 0.687 ± 0.022 | 0.116 ± 0.016 | 0.411 ± 0.003 | 0.118 ± 0.019 |
| IDW | 2.987 ± 0.070 | 1.474 ± 0.020 | 0.645 ± 0.018 | 0.145 ± 0.004 | 0.352 ± 0.010 | 0.157 ± 0.002 |
| Linear regression | 2.967 ± 0.064 | 1.457 ± 0.020 | 0.709 ± 0.019 | 0.170 ± 0.005 | 0.299 ± 0.017 | 0.189 ± 0.012 |
| GSMaP GC | 2.942 ± 0.085 | 1.473 ± 0.039 | 0.737 ± 0.039 | 0.147 ± 0.007 | 0.375 ± 0.022 | 0.490 ± 0.023 |
| NSP (Ours) | **2.818 ± 0.062** | **1.444 ± 0.026** | **0.393 ± 0.047** | **0.076 ± 0.013** | **0.478 ± 0.021** | **0.527 ± 0.022** |
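FSSR in the results above is a Fractions Skill Score, which rewards a prediction for placing rain near where the reference has it rather than only at the exact pixel. A minimal NumPy sketch of the standard single-threshold FSS follows, reading the "R" subscript as "radar". The threshold and window size QPEBench uses are not given here, so any values passed in are assumptions, and `neighbourhood_fraction` and `fss` are hypothetical names.

```python
import numpy as np


def neighbourhood_fraction(binary, n):
    """Fraction of exceedances in an n x n window centred on each pixel (zero-padded)."""
    pad = n // 2
    padded = np.pad(binary.astype(float), pad, mode="constant")
    # Summed-area table with a leading row and column of zeros
    table = np.zeros((padded.shape[0] + 1, padded.shape[1] + 1))
    table[1:, 1:] = padded.cumsum(axis=0).cumsum(axis=1)
    h, w = binary.shape
    window_sum = (table[n:n + h, n:n + w] - table[:h, n:n + w]
                  - table[n:n + h, :w] + table[:h, :w])
    return window_sum / (n * n)


def fss(forecast, observed, threshold, n):
    """Fractions Skill Score for one threshold and an odd window size n."""
    if n % 2 == 0:
        raise ValueError("window size n must be odd")
    pf = neighbourhood_fraction(forecast >= threshold, n)
    po = neighbourhood_fraction(observed >= threshold, n)
    mse = np.mean((pf - po) ** 2)
    reference = np.mean(pf ** 2) + np.mean(po ** 2)
    # Undefined when neither field exceeds the threshold anywhere
    return 1.0 - mse / reference if reference > 0 else np.nan
```

With n = 1 this reduces to a pixel-wise comparison of exceedance masks; larger windows tolerate small displacement errors, which is why a low FSS alongside a good RMSE (as for several interpolation baselines in Table 1) points to smoothed or misplaced spatial structure.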

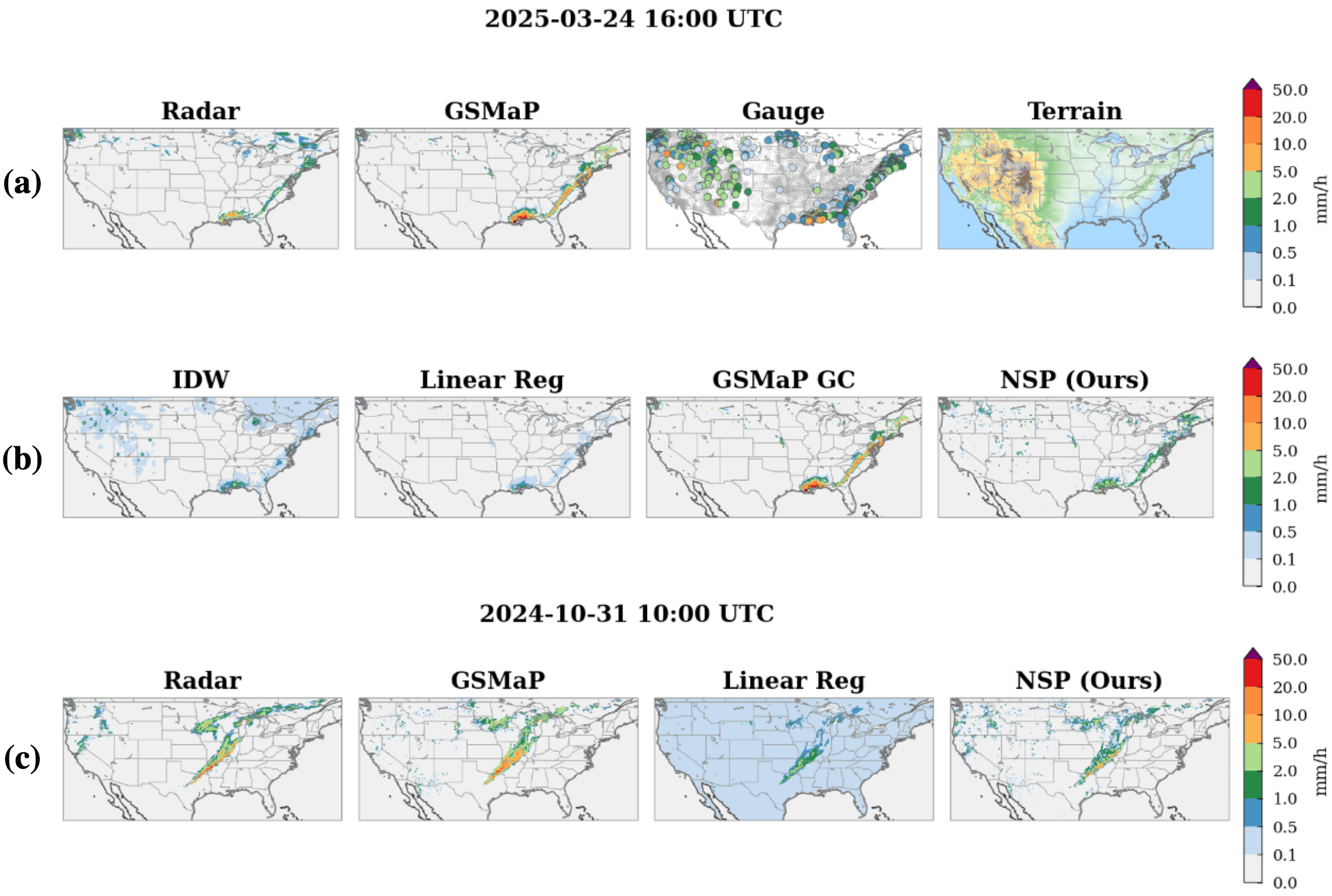

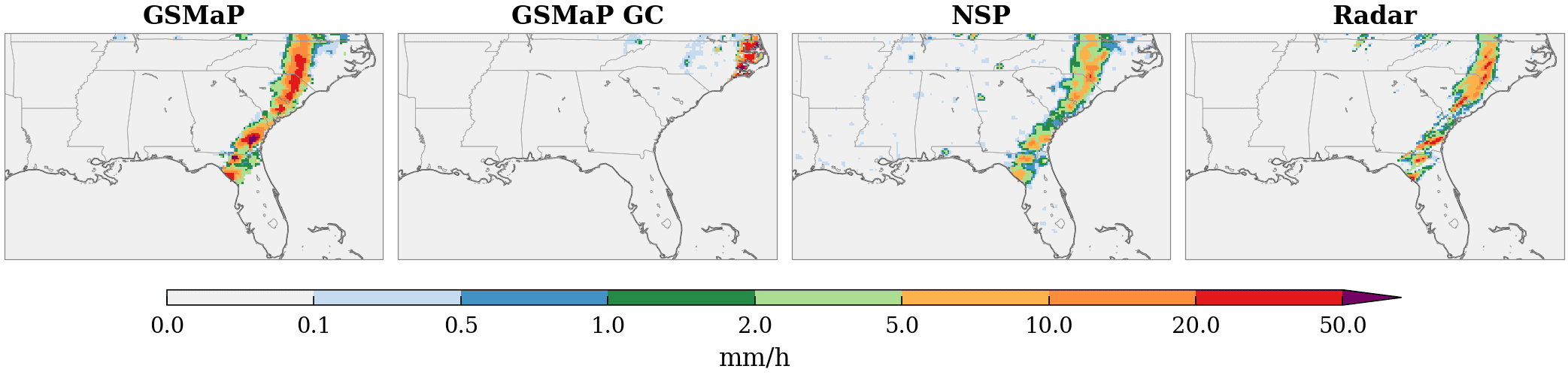

Qualitative Results

Interactive Demo

The static demo lets reviewers browse the March 2025 CONUS test month at a six-hour cadence. Each snapshot shows the actual model inputs and outputs used in the supplementary material: GSMaP MVK, ETOPO 2022 elevation, the NSP refined field, and the MRMS radar reference. The gauge channel, which the model also consumes, is neither shown nor shipped, because the Synoptic Data API terms of service prohibit redistribution of the underlying station readings.

BibTeX

@inproceedings{anon2026nsp,
  title = {Neural Stochastic Processes for Satellite Precipitation Refinement},
  author = {Anonymous},
  note = {Under review},
  year = {2026}
}

A final citation entry will be released once review is complete.